Aerospace Certification Standards Comparison: Where Compliance Delays Usually Begin

A comparison of aerospace certification standards often reveals that compliance delays rarely start at final approval. They begin much earlier, in requirement mapping, test planning, and documentation control. For quality and safety leaders navigating FAA, EASA, and cross-sector standards, understanding where these gaps emerge is essential to reducing risk, avoiding rework, and accelerating program readiness.

What Aerospace Certification Standards Comparison Really Means

For quality control teams and safety managers, an aerospace certification standards comparison is not simply a side-by-side reading of regulations. It is a structured analysis of how different authorities, guidance documents, test expectations, and evidence models affect design assurance, verification strategy, supplier control, and release readiness. In practice, comparison work often centers on the FAA and EASA frameworks, but it also touches adjacent standards such as ISO-based quality systems, environmental qualification standards, software assurance, and the sector-specific safety methods used in advanced transportation.

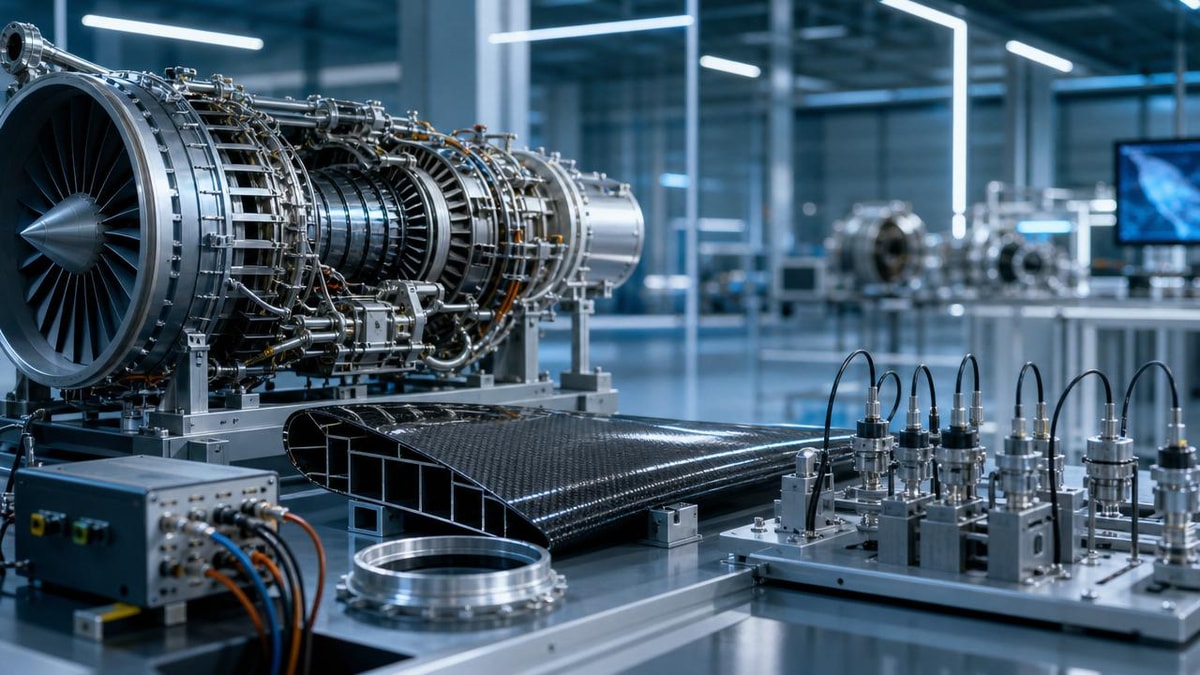

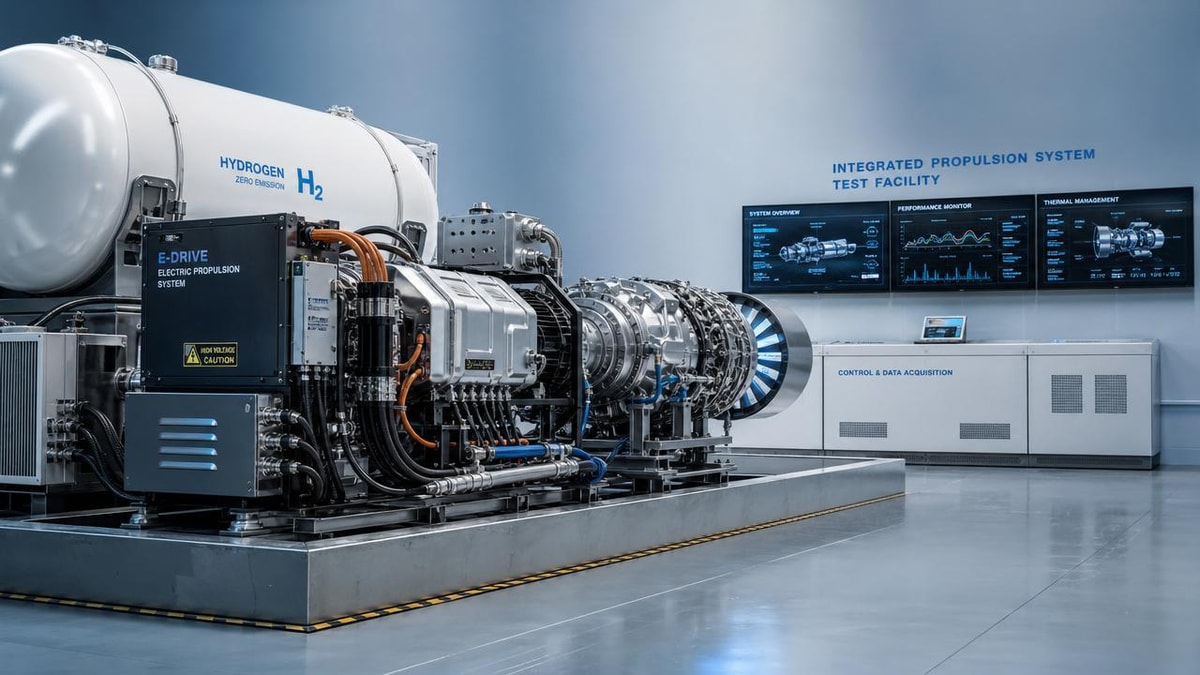

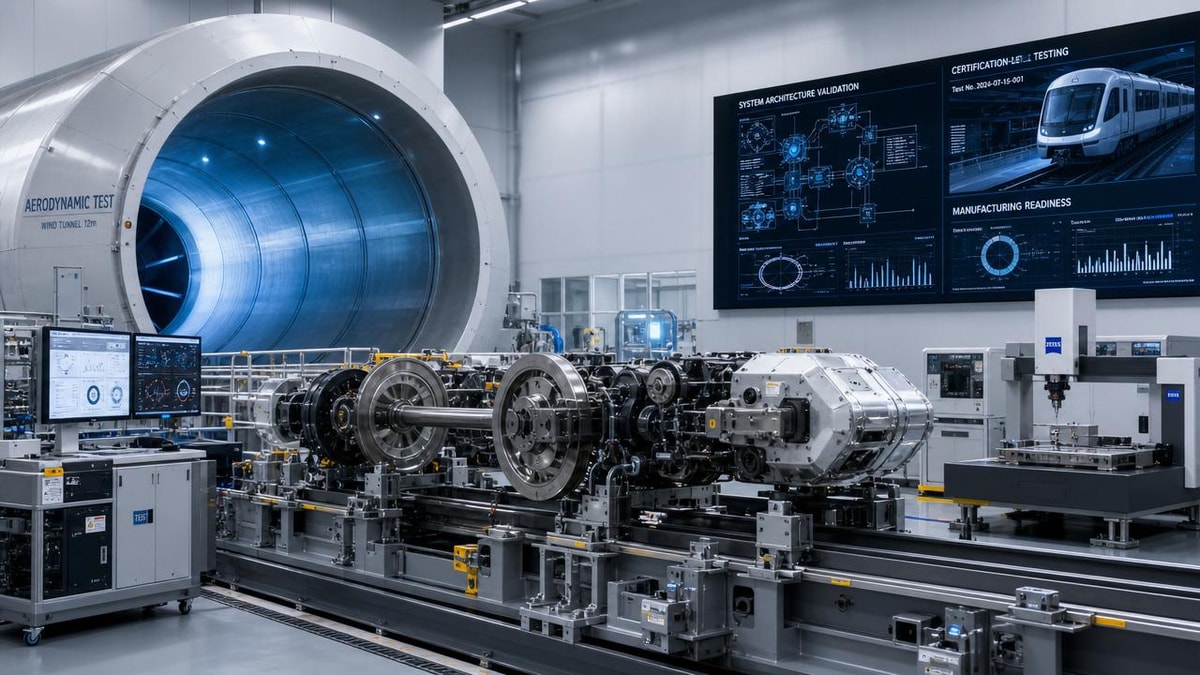

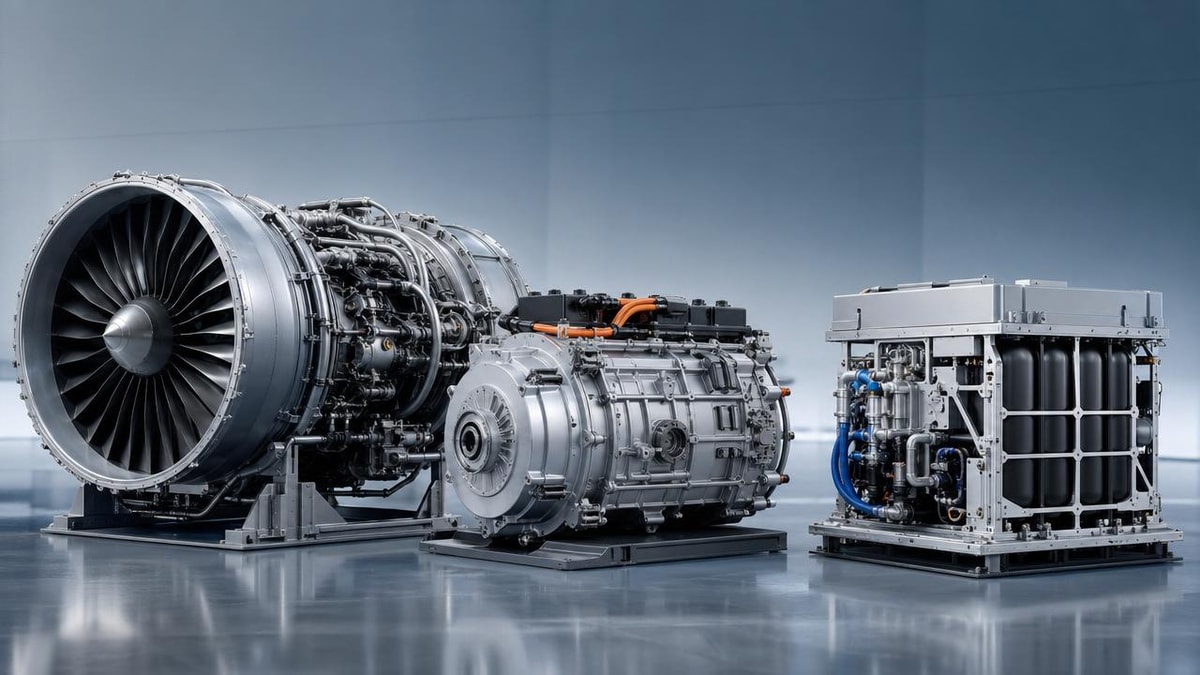

This matters more today because aerospace programs increasingly overlap with emerging mobility sectors. Urban air mobility, autonomous systems, electrified propulsion, satellite-enabled navigation, and high-speed transportation platforms are forcing organizations to reconcile legacy certification logic with new architectures. Institutions such as G-AIT sit at the intersection of these domains, helping engineering and assurance leaders benchmark technical innovation against compliance reality. That is why a standards comparison should begin at the system-definition stage, not after testing is already underway.

Why the Industry Pays Close Attention to Early Compliance Gaps

The aerospace sector operates under an unforgiving rule: evidence created late is usually expensive evidence. When organizations discover misaligned assumptions between standards after design freeze, every correction affects schedule, cost, traceability, and sometimes safety cases. Many delays that appear to be “certification bottlenecks” are actually program-management issues rooted in unclear requirement ownership or incomplete verification planning.

An effective standards comparison helps teams answer several questions early. Which requirements are mandatory and which are advisory? Which test conditions differ by authority? What level of supplier evidence is acceptable? How must software, hardware, structures, human factors, and maintenance data be linked in the compliance package? For quality and safety leaders, the answers determine whether a program moves smoothly toward conformity or accumulates hidden debt that surfaces during audits, witnessing, or approval reviews.

A Practical Industry Overview for Quality and Safety Leaders

The table below offers a practical comparison across the major domains that most often shape compliance planning in conventional and advanced mobility programs.

This overview shows that delays rarely originate from a single failed audit. They arise when requirements, test conditions, and evidence ownership are fragmented across engineering, quality, procurement, and certification teams. A strong standards comparison turns these fragmented issues into a coherent control framework.

Where Compliance Delays Usually Begin

Although each program has its own technical profile, most recurring delays begin in five predictable areas.

1. Requirement mapping that is broad but not actionable

Teams often create high-level compliance matrices that list applicable rules without translating them into system-level, item-level, and verification-level obligations. This creates false confidence: by the time design reviews occur, unresolved interpretations have multiplied. In a standards comparison, the real value comes from decomposing each requirement into measurable acceptance criteria, responsible owners, and expected evidence forms.
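The decomposition described above can be sketched as a small data model that flags requirements which are listed but not yet actionable. This is a minimal illustration under stated assumptions: the `Requirement` class, its field names, the `actionability_gaps` helper, and the sample IDs and criteria are all hypothetical, not a schema defined by the FAA, EASA, or any standard.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Requirement:
    """One decomposed obligation in a hypothetical compliance matrix."""
    req_id: str
    source: str  # the rule or guidance text the obligation was derived from
    acceptance_criteria: list[str] = field(default_factory=list)
    owner: str | None = None  # a single accountable person or role
    evidence_forms: list[str] = field(default_factory=list)  # e.g. "test report"


def actionability_gaps(req: Requirement) -> list[str]:
    """Return the reasons a listed requirement is not yet actionable."""
    gaps = []
    if not req.acceptance_criteria:
        gaps.append("no measurable acceptance criteria")
    if not req.owner:
        gaps.append("no responsible owner")
    if not req.evidence_forms:
        gaps.append("no expected evidence form")
    return gaps


# Illustrative entries: IDs, sources, and criteria are placeholders.
matrix = [
    Requirement(
        "REQ-001",
        "placeholder rule A",
        acceptance_criteria=["measured deflection within stated limit"],
        owner="structures lead",
        evidence_forms=["test report"],
    ),
    Requirement("REQ-002", "placeholder rule B"),  # listed, but not decomposed
]

for req in matrix:
    print(req.req_id, actionability_gaps(req) or "actionable")
```

A requirement like `REQ-002` is exactly the "broad but not actionable" entry the section warns about: it appears in the matrix, yet nobody can say how it will be closed or who will close it.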

2. Test planning that starts after design maturity assumptions are locked

Many organizations treat test planning as a downstream activity. That is risky. If environmental qualification, structural substantiation, software coverage, or failure-condition assessments are not aligned early, later test campaigns may prove incomplete or non-representative. The result is retesting, re-analysis, or authority questions that delay closure.

3. Documentation control that lags behind engineering change

Documentation is not administrative overhead; it is part of the evidence chain. Safety managers frequently see the same problem: hardware changes, software revisions, supplier substitutions, or process updates occur faster than compliance records are updated. When the certification package is reviewed, document baselines no longer match the tested configuration. This is one of the most common causes of approval friction.
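A rudimentary version of this baseline check can be automated by comparing the revisions recorded in the document baseline with those on the tested article. The sketch below assumes both configurations can be exported as simple item-to-revision mappings; the function name `baseline_mismatches` and the item names are hypothetical.

```python
from __future__ import annotations


def baseline_mismatches(
    documented: dict[str, str], tested: dict[str, str]
) -> dict[str, tuple[str | None, str | None]]:
    """Compare documented revisions with the revisions on the tested article.

    Keys are configuration item identifiers (hardware parts, software loads,
    supplier parts); values are revision labels. Every item whose revision
    differs, or that appears on only one side, is returned as
    (documented_revision, tested_revision), with None for a missing side.
    """
    mismatches = {}
    for item in sorted(documented.keys() | tested.keys()):
        doc_rev = documented.get(item)
        test_rev = tested.get(item)
        if doc_rev != test_rev:
            mismatches[item] = (doc_rev, test_rev)
    return mismatches


# Hypothetical configuration items and revisions.
documented = {"bracket-A": "C", "flight-sw": "2.1", "battery-module": "B"}
tested = {"bracket-A": "D", "flight-sw": "2.1", "seal-kit": "A"}

for item, (doc_rev, test_rev) in baseline_mismatches(documented, tested).items():
    print(f"{item}: documented={doc_rev}, tested={test_rev}")
```

Reporting items that exist on only one side matters as much as revision differences: a supplier substitution typically shows up as an item missing from the documented baseline rather than as a changed revision.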

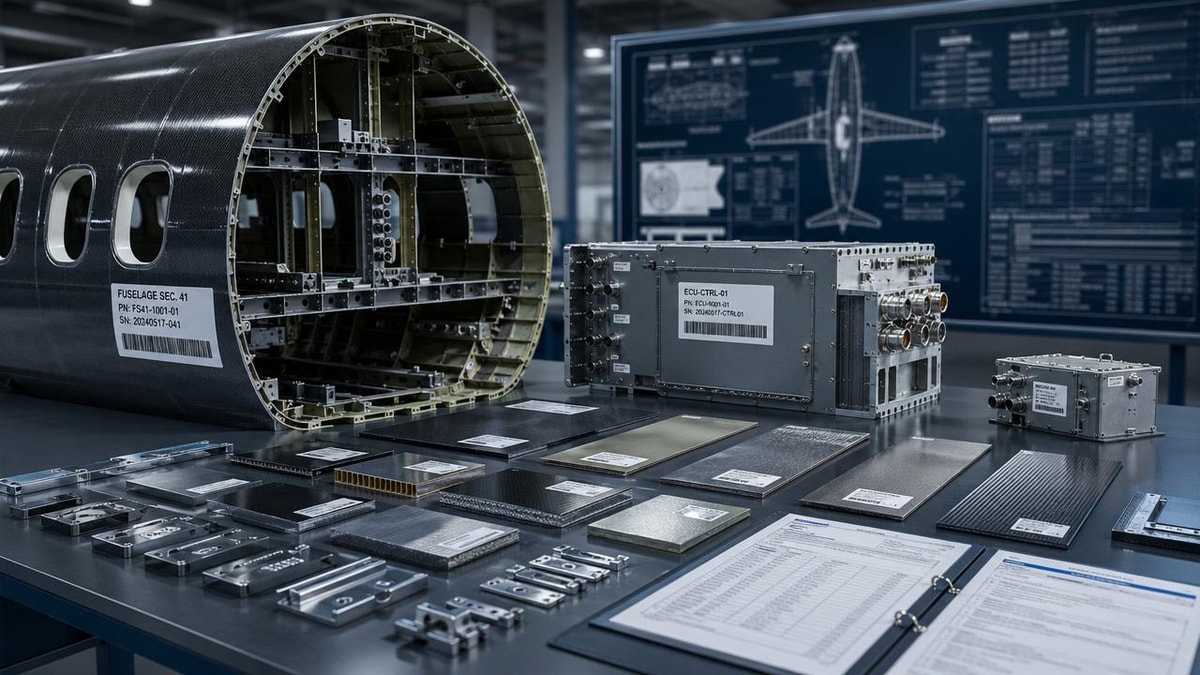

4. Supplier evidence accepted without authority-level scrutiny

Advanced aerospace and transportation programs rely heavily on specialized suppliers for composites, avionics, batteries, propulsion modules, software components, and safety-critical processes. However, supplier documentation may be technically strong yet still insufficient for the intended certification path. A standards comparison should therefore include acceptance rules for external evidence, not just for internal deliverables.

5. Cross-functional ownership that is assumed rather than assigned

One of the quietest sources of delay is unclear ownership between engineering, quality, safety, and program management. If no one owns the closure logic for a requirement, everyone assumes someone else does. In regulated programs, ambiguity converts directly into schedule risk.

Why Standards Comparison Has Broader Value Beyond Approval

A mature standards comparison is not only about passing authority review. It also improves internal governance. For quality personnel, it sharpens audit readiness and reduces nonconformance recurrence. For safety leaders, it strengthens hazard traceability and verification credibility. For executive and program stakeholders, it provides earlier visibility into schedule threats, evidence gaps, and supplier readiness.

This broader value is especially important in integrated mobility environments such as those monitored by G-AIT. Programs involving eVTOL systems, advanced airframes, space-adjacent platforms, or autonomous transport controls often combine aerospace expectations with new operational models. Standards comparison helps organizations avoid treating innovation as an excuse for weak assurance discipline. Instead, it creates a benchmarked pathway where advanced technology and compliance maturity evolve together.

Typical Program Types That Benefit Most

Not every program experiences the same level of certification complexity. The following categories typically gain the most practical value from an early standards comparison.

Practical Suggestions for Quality Control and Safety Management Teams

To make standards comparison operational rather than theoretical, organizations should embed it in routine controls.

- Create a living compliance map that links each requirement to design features, test methods, analyses, documents, and responsible owners.

- Review verification plans before major design commitments, not after procurement and fabrication have started.

- Apply document baseline discipline to every engineering change, especially where tested articles, software loads, and supplier parts are involved.

- Define evidence acceptance criteria for suppliers, including format, traceability, configuration status, and witness expectations.

- Use cross-functional gate reviews where engineering, quality, safety, and certification leads confirm closure logic together.

- Benchmark new mobility technologies against both aerospace and adjacent transportation standards when architectures are novel or hybrid.
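The supplier-evidence criteria in the list above (format, traceability, configuration status, witness expectations) can be expressed as a simple review function that returns blocking findings. This is a sketch, not a prescribed method: the `SupplierEvidence` and `AcceptanceRules` classes, their fields, and the example values ("pdf", "released", the requirement IDs) are assumptions that each program would replace with its own rules.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class SupplierEvidence:
    """A hypothetical record of one evidence item received from a supplier."""
    supplier: str
    doc_format: str  # e.g. "pdf"
    traced_requirements: set[str]  # requirement IDs the evidence claims to close
    config_status: str  # e.g. "released" or "draft"
    witnessed: bool  # whether the test was witnessed as planned


@dataclass
class AcceptanceRules:
    """Program-defined acceptance criteria; all values here are assumptions."""
    allowed_formats: set[str]
    required_config_status: str
    witness_required: bool


def review(
    evidence: SupplierEvidence, rules: AcceptanceRules, known_reqs: set[str]
) -> list[str]:
    """Return findings that block acceptance; an empty list means acceptable."""
    findings = []
    if evidence.doc_format not in rules.allowed_formats:
        findings.append(f"format '{evidence.doc_format}' not accepted")
    if not evidence.traced_requirements:
        findings.append("no traceability to requirements")
    unknown = evidence.traced_requirements - known_reqs
    if unknown:
        findings.append(f"traces to unknown requirements: {sorted(unknown)}")
    if evidence.config_status != rules.required_config_status:
        findings.append(
            f"configuration status '{evidence.config_status}' "
            f"is not '{rules.required_config_status}'"
        )
    if rules.witness_required and not evidence.witnessed:
        findings.append("witness expectation not met")
    return findings


rules = AcceptanceRules({"pdf"}, "released", witness_required=True)
submission = SupplierEvidence(
    "Supplier X", "docx", {"REQ-007", "REQ-999"}, "draft", witnessed=False
)
for finding in review(submission, rules, known_reqs={"REQ-007"}):
    print(finding)
```

Checking traced IDs against the known requirement set ties supplier evidence back to the living compliance map, so evidence that "closes" a requirement the program never defined is caught before it reaches the certification package.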

FAQ for Teams Managing Early Compliance Risk

Is an aerospace certification standards comparison only useful for large aircraft OEMs?

No. It is equally valuable for suppliers, subsystem developers, advanced mobility startups, and specialized engineering teams. Smaller organizations often face greater risk because they have fewer resources to absorb late rework.

What is the earliest point to start?

The best time is during concept definition and initial architecture selection. Once major design assumptions are locked, changing the compliance pathway becomes far more disruptive.

Can a strong quality system alone prevent certification delays?

A strong quality system helps, but it is not enough by itself. The organization also needs accurate requirement interpretation, a credible verification strategy, controlled supplier evidence, and aligned safety documentation.

From Comparison to Readiness

The most useful standards comparison is the one that changes behavior early. It clarifies what compliance really requires, highlights where approval delays usually begin, and gives quality and safety leaders a practical basis for intervention before problems harden into program setbacks. In a market shaped by next-generation airframes, autonomous control systems, and cross-border certification expectations, disciplined comparison is no longer optional; it is a readiness tool.

For organizations operating across aerospace and advanced transportation, the next step is to turn standards insight into a repeatable control model: benchmark requirements, test assumptions, supplier evidence, and document baselines continuously. That is how compliance delays are reduced at their true point of origin—long before final approval is on the table.
