Aerospace Engineering Certification Standards: Gaps That Trigger Rework

Aerospace Engineering certification standards often appear complete on paper, yet hidden gaps in traceability, validation, and cross-functional review can quietly trigger costly rework. For quality and safety leaders, understanding where compliance frameworks break down is essential to protecting schedules, budgets, and airworthiness. This article examines the overlooked certification weak points that turn late-stage findings into major engineering setbacks.

What Aerospace Engineering certification standards really cover

At a practical level, Aerospace Engineering certification standards are not just a list of technical rules. They form the structured evidence system that proves each design, process, software function, material selection, manufacturing method, and maintenance assumption is acceptable under airworthiness and safety expectations. For quality control and safety management teams, the real challenge is rarely the existence of requirements from bodies such as the FAA, EASA, or ISO, or of related program requirements. The problem is whether every requirement can be traced to design intent, verified by acceptable methods, reviewed across functions, and preserved as auditable evidence.

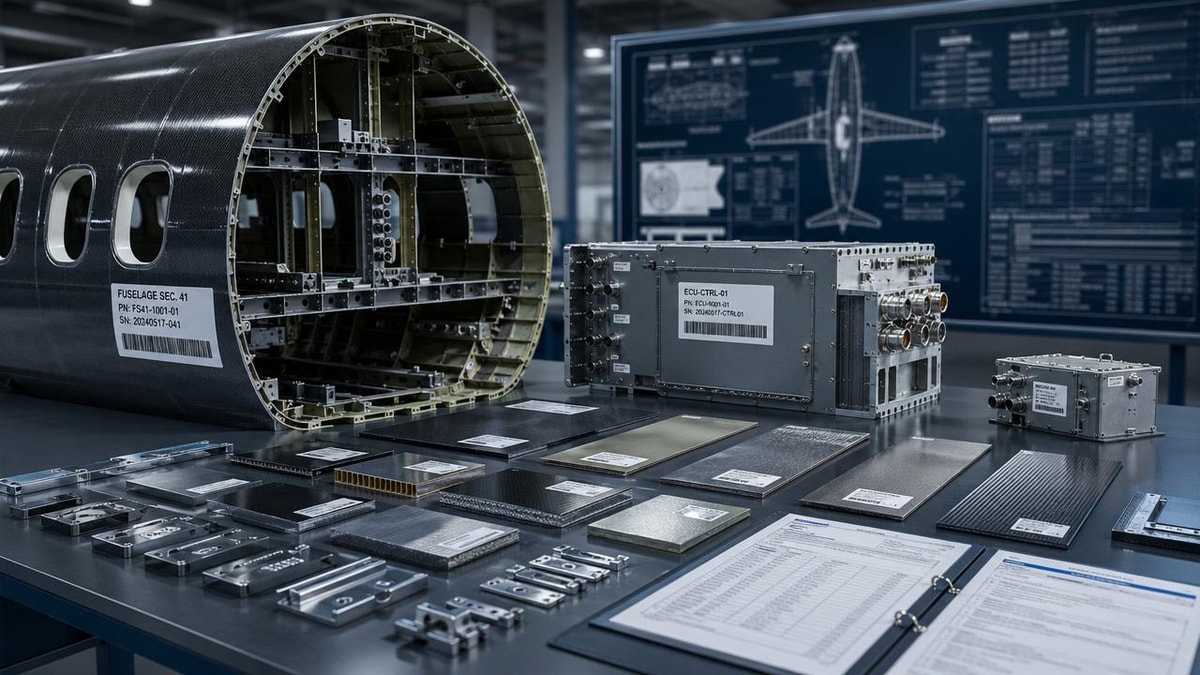

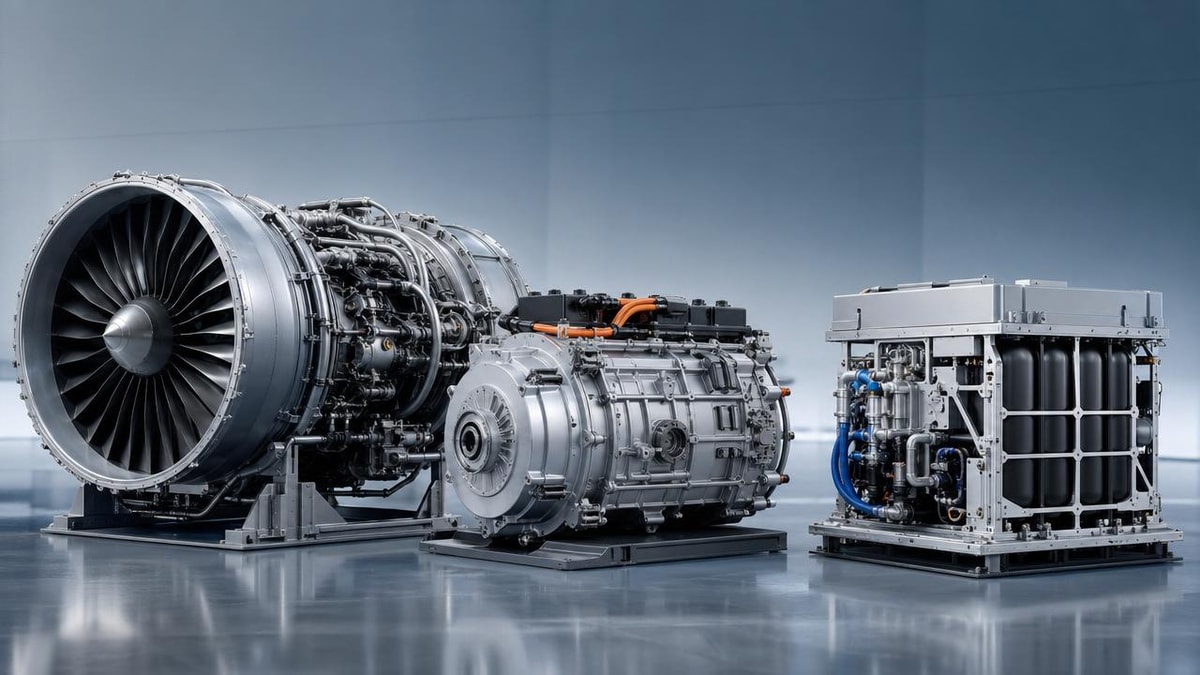

In advanced mobility sectors such as next-generation aviation, space systems, urban air mobility, and high-speed autonomous transport, certification complexity increases because technologies evolve faster than legacy compliance habits. Composite structures, electric propulsion, autonomous control logic, thermal management, battery safety, and human-machine interface functions often cross multiple regulatory domains. This is where Aerospace Engineering certification standards become operational rather than theoretical: they must connect engineering ambition with disciplined proof.

Why the industry keeps seeing rework despite mature frameworks

Many organizations assume rework starts when a regulator raises a finding late in the program. In reality, the finding is usually the final symptom of an earlier disconnect. Engineering may interpret a requirement differently from quality. Supplier documentation may not align with the certification basis. Test teams may validate performance, while safety teams still need evidence of failure containment. Program management may track milestones, but not compliance maturity. When these disconnects remain hidden, work proceeds quickly until a review exposes that evidence is incomplete, inconsistent, or unusable.

This pattern matters across the broader transportation-intelligence landscape represented by organizations such as G-AIT. Whether the platform is a composite airframe, cryogenic propulsion assembly, autonomous rail control subsystem, or eVTOL flight software stack, the same governance lesson applies: certification success depends on linking technical performance to documented compliance logic from the beginning, not at the end.

The most common gaps that trigger certification rework

For quality and safety leaders, the most damaging gaps are often small enough to escape attention during fast development cycles. Yet each one can create major schedule slips when discovered during formal review.

1. Weak requirement traceability

Aerospace Engineering certification standards require a clear path from regulation or certification basis to system requirement, design output, verification activity, and objective evidence. Rework begins when one of these links is missing. Teams may have test reports but be unable to show which clause each report satisfies, or they may map a top-level requirement without flowing it down to subsystem and supplier levels.
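The chain described above can be checked mechanically. The following is a minimal sketch, not a prescribed tool: the `TraceRecord` structure, its field names, and identifiers such as `CB-001` are hypothetical and stand in for whatever a program's requirements database actually holds.

```python
from dataclasses import dataclass

# Hypothetical record: one row of a requirement-to-evidence trace.
# Field names and ID formats are illustrative assumptions only.
@dataclass
class TraceRecord:
    clause: str                      # certification basis clause
    system_requirement: str = ""
    design_output: str = ""
    verification_activity: str = ""
    objective_evidence: str = ""

# The links that must all be present for a complete chain.
LINKS = (
    "system_requirement",
    "design_output",
    "verification_activity",
    "objective_evidence",
)

def missing_links(record):
    """Return the names of trace links that are empty for this record."""
    return [name for name in LINKS if not getattr(record, name).strip()]

def trace_gaps(records):
    """Map each clause with an incomplete chain to its missing links."""
    gaps = {}
    for record in records:
        missing = missing_links(record)
        if missing:
            gaps[record.clause] = missing
    return gaps

records = [
    TraceRecord("CB-001", "SYS-10", "DWG-100", "TEST-7", "RPT-7"),
    TraceRecord("CB-002", "SYS-11", "DWG-101", "TEST-8", ""),
    TraceRecord("CB-003", "SYS-12"),
]
gaps = trace_gaps(records)
for clause, missing in gaps.items():
    print(clause, "is missing:", ", ".join(missing))
```

In this sketch a clause counts as traced only when every link is populated. A real program would likely also check that the referenced items exist and are at the correct revision.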

2. Validation that proves performance but not compliance intent

Passing a test does not always equal compliance. A structural test may show that the component survives load without reflecting the approved load case assumptions. A software simulation may demonstrate function without satisfying independence or tool qualification expectations. Rework occurs when verification activities are technically impressive but produce weak certification-relevant evidence.

3. Late safety integration

Safety analyses such as FHA, FTA, or FMEA are sometimes treated as parallel documentation instead of design-driving inputs. When hazard controls are not embedded early, the program later discovers that architecture, redundancy, maintenance logic, or monitoring thresholds do not support the claimed safety objective.

4. Supplier evidence that is incomplete or non-standardized

Aerospace programs increasingly rely on specialized suppliers for batteries, avionics, composites, sensors, and software modules. If supplier qualification records, material pedigree, process controls, or configuration histories are inconsistent, the integrator inherits the gap. Certification rework frequently starts at these interfaces.
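One way an integrator might surface these interface gaps early is a simple completeness check on each supplier's evidence package. The sketch below assumes a hypothetical set of required item names taken from the categories mentioned above; the supplier names and the package format are illustrative only.

```python
# Hypothetical required contents of a supplier evidence package,
# named after the categories discussed in the text.
REQUIRED_ITEMS = {
    "qualification_record",
    "material_pedigree",
    "process_controls",
    "configuration_history",
}

def package_gaps(packages):
    """Return {supplier: sorted list of missing items} for incomplete packages."""
    gaps = {}
    for supplier, items in packages.items():
        missing = REQUIRED_ITEMS - set(items)
        if missing:
            gaps[supplier] = sorted(missing)
    return gaps

# Illustrative data: what each supplier has delivered so far.
packages = {
    "battery_supplier": ["qualification_record", "material_pedigree",
                         "process_controls", "configuration_history"],
    "sensor_supplier": ["qualification_record", "process_controls"],
}
supplier_gaps = package_gaps(packages)
for supplier, missing in supplier_gaps.items():
    print(supplier, "has not provided:", ", ".join(missing))
```

The check only confirms that an item was delivered, not that its content is adequate, so it complements rather than replaces a technical review of the package.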

5. Configuration drift between design, test article, and released documentation

One of the most expensive failure modes is discovering that the tested configuration is not fully representative of the final production baseline. Even small undocumented differences in software versions, bonding processes, wiring routes, or fastener specifications can undermine certification credit.
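A configuration comparison of this kind can be sketched in a few lines. The example assumes each configuration is available as a flat mapping of item name to identifier. The keys and values shown (`software_version`, `FS-220`, and so on) are hypothetical placeholders, not data from any real program.

```python
def config_drift(test_article, production_baseline):
    """Return {item: (tested_value, baseline_value)} for every mismatch.

    An item present on only one side is reported with None for the
    side where it is missing.
    """
    drift = {}
    for key in sorted(set(test_article) | set(production_baseline)):
        tested = test_article.get(key)
        released = production_baseline.get(key)
        if tested != released:
            drift[key] = (tested, released)
    return drift

# Illustrative configurations (all identifiers are assumptions).
test_article = {
    "software_version": "2.3.1",
    "fastener_spec": "FS-220",
    "wiring_route": "WR-A",
}
production_baseline = {
    "software_version": "2.3.2",
    "fastener_spec": "FS-220",
    "wiring_route": "WR-A",
    "bonding_process": "BP-4 rev C",
}

drift = config_drift(test_article, production_baseline)
for item, (tested, released) in drift.items():
    print(f"{item}: tested={tested!r}, baseline={released!r}")
```

Each reported difference still needs an engineering judgment about whether it affects certification credit. The comparison only ensures that no difference goes undocumented.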

6. Cross-functional review that happens too late

Engineering, quality, certification, manufacturing, and safety teams often meet formally at stage gates, but not consistently during requirement interpretation and evidence planning. By the time everyone reviews the same package, foundational assumptions may already be locked into drawings, procedures, and supplier contracts.

Where these gaps appear across advanced mobility programs

Although Aerospace Engineering certification standards are commonly associated with aircraft, the same compliance weaknesses appear across adjacent sectors where safety-critical performance and regulatory scrutiny intersect. The table below shows how rework risk shifts by application context.

Why this matters specifically to quality and safety leaders

Quality control personnel and safety managers are often the first to see patterns that engineering teams normalize. A missing revision reference, an unapproved test deviation, a supplier certificate with weak traceability, or a hazard control that lacks objective verification may look administrative at first. Under Aerospace Engineering certification standards, however, these are not paperwork issues. They are signals that the proof chain is fragile.

Their value to the organization is therefore strategic. By challenging evidence quality early, they reduce technical debt. By aligning process discipline with certification expectations, they protect both safety and program economics. In sectors driven by rapid innovation, this governance function becomes even more important because novel technologies attract heightened scrutiny from authorities, customers, insurers, and investors.

A practical framework for evaluating certification readiness

A useful way to assess Aerospace Engineering certification standards in practice is to review readiness across five control layers rather than relying on milestone confidence alone.

Requirement clarity

Confirm that the certification basis, means of compliance, assumptions, and acceptance criteria are explicit and shared. Ambiguity at this stage spreads through every later artifact.

Evidence planning

Check whether each requirement has an agreed proof method: analysis, test, inspection, similarity, simulation, or combined approach. If the method changes, the rationale and impact should be controlled.

Configuration integrity

Ensure that baselines for design, software, manufacturing process, and test article remain synchronized. Without this, evidence loses authority.

Cross-functional accountability

Require visible ownership across engineering, quality, safety, certification, supply chain, and operations. Gaps usually live in handoffs, not in isolated departments.

Auditability

Ask whether an internal reviewer or external authority could reconstruct the compliance story without relying on tribal knowledge. If the answer is no, rework risk remains high.
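The five control layers above can be tracked as a simple readiness report. This is a sketch under stated assumptions: the layer keys mirror the headings in this section, while the rule that a program is ready only when every layer has been assessed and has no open items is an illustrative choice, not an established scoring method.

```python
# Layer names mirror the five control layers in this section.
LAYERS = (
    "requirement_clarity",
    "evidence_planning",
    "configuration_integrity",
    "cross_functional_accountability",
    "auditability",
)

def readiness_report(open_items):
    """Summarize readiness from {layer: [open issue descriptions]}.

    A layer absent from open_items is treated as not assessed,
    which blocks readiness just as an open issue does.
    """
    unknown = set(open_items) - set(LAYERS)
    if unknown:
        raise ValueError(f"unknown layers: {sorted(unknown)}")
    not_assessed = [layer for layer in LAYERS if layer not in open_items]
    blocking = {layer: items for layer, items in open_items.items() if items}
    return {
        "ready": not not_assessed and not blocking,
        "not_assessed": not_assessed,
        "blocking": blocking,
    }

# Illustrative assessment (issue text is hypothetical).
report = readiness_report({
    "requirement_clarity": [],
    "evidence_planning": ["proof method changed without recorded rationale"],
    "configuration_integrity": [],
    "auditability": [],
})
print("ready:", report["ready"])
print("not assessed:", report["not_assessed"])
print("blocking:", report["blocking"])
```

Treating an unassessed layer as blocking is deliberate in this sketch: it keeps milestone confidence from standing in for a review that never took place.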

Implementation advice for reducing late-stage findings

Organizations do not reduce certification rework simply by adding more reviews. They reduce it by improving the quality, timing, and structure of reviews. Several practices consistently strengthen performance against Aerospace Engineering certification standards.

- Build a requirement-to-evidence matrix early and update it continuously, not only before audits.

- Use joint review sessions where engineering, safety, and quality evaluate assumptions together before test execution.

- Standardize supplier evidence packages for material pedigree, process control, software history, and deviation approval.

- Treat configuration management as a certification control, not only a document control function.

- Run internal “authority-style” readiness assessments that challenge logic, traceability, and evidence sufficiency.

For institutions and strategic benchmarking bodies operating across aerospace and advanced transport domains, these practices also create comparable maturity indicators. That makes it easier to evaluate whether a high-performance technology is merely innovative or truly certifiable at scale.

Closing perspective

Aerospace Engineering certification standards are most valuable when they are understood as a system of disciplined proof, not a final approval hurdle. Rework is rarely caused by a single failed test or one missing document. It is usually triggered by hidden breaks in traceability, validation logic, safety integration, supplier control, or configuration integrity. For quality and safety professionals, identifying these breaks early is one of the highest-value actions available.

If your organization is developing next-generation airframes, autonomous mobility platforms, space systems, or other safety-critical transport technologies, now is the right time to review where your compliance framework is vulnerable. Stronger evidence architecture today can prevent expensive redesign, delayed approvals, and avoidable operational risk tomorrow.

Posted by: Dr. Aris Aero
