What Defines Top Aerospace Engineering R&D Facilities

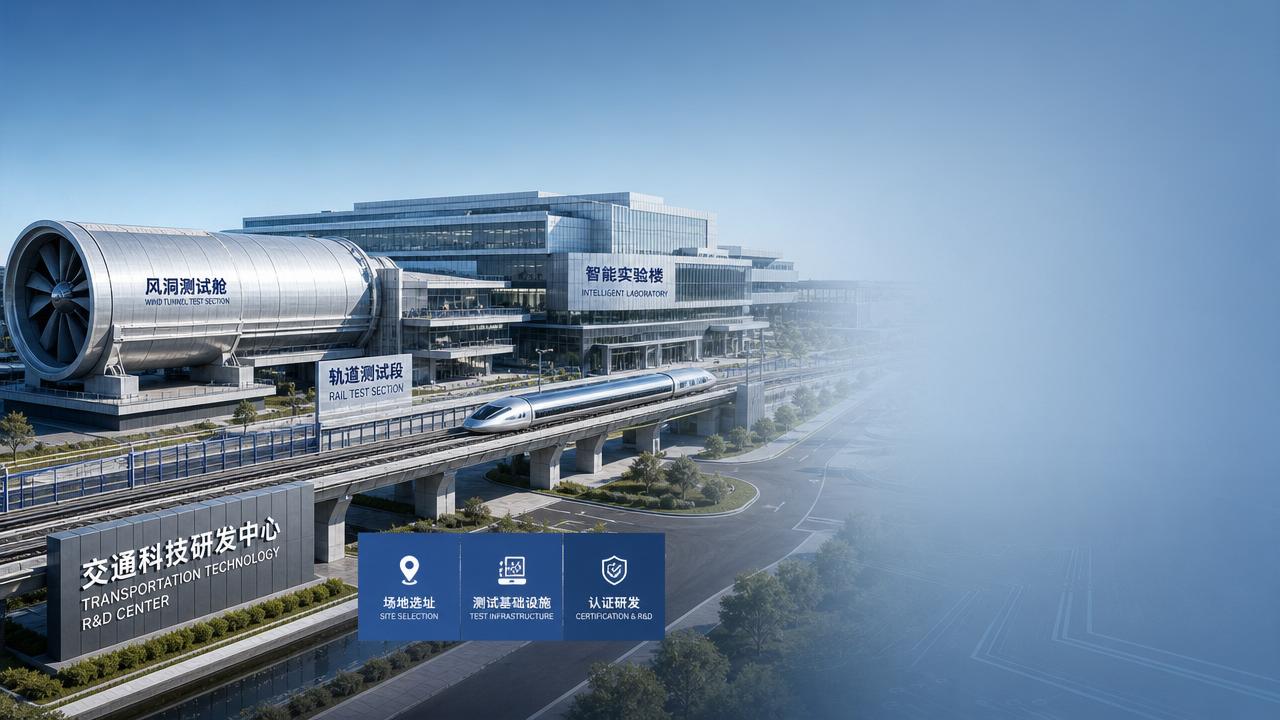

Top Aerospace Engineering R&D facilities are not defined by size alone. For technical evaluators, the real differentiators are test capability, systems integration depth, certification readiness, digital engineering maturity, and the facility’s ability to convert advanced concepts into repeatable, airworthy, and certifiable outcomes.

When organizations assess Aerospace Engineering R&D facilities, they are usually not asking which site looks most advanced on paper. They want to know which environments can reduce technical uncertainty, support safe development, and accelerate decisions across design, validation, compliance, and program scale-up.

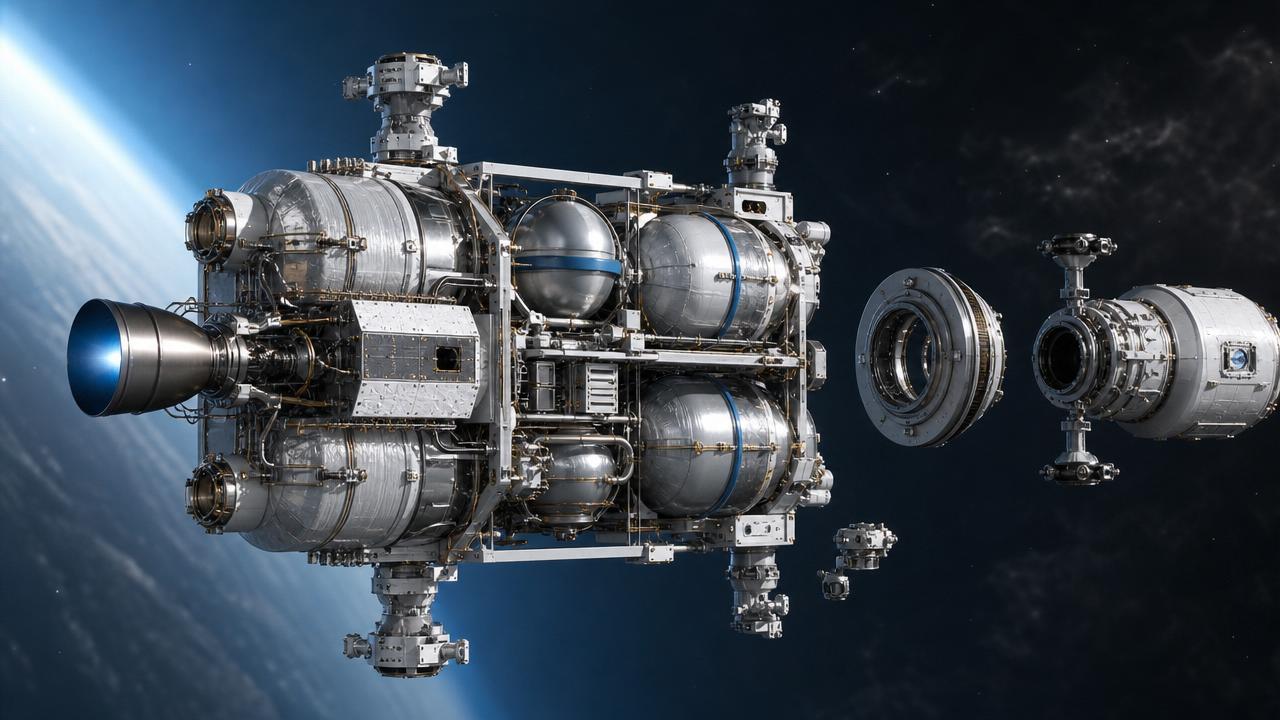

That is why the best facilities combine hardware, software, talent, process discipline, and regulatory alignment into a single development ecosystem. A top site can test propulsion, structures, avionics, autonomy, materials, and manufacturing readiness without losing traceability between concept, evidence, and certification expectations.

What technical evaluators are really looking for in Aerospace Engineering R&D facilities

The underlying need is practical evaluation. Readers want a reliable way to distinguish world-class Aerospace Engineering R&D facilities from well-funded but less capable sites that cannot consistently de-risk complex aerospace programs.

For technical assessment teams, the most important questions are straightforward. Can the facility generate credible test data? Can it support multidisciplinary development? Can it align with FAA, EASA, military, or space qualification frameworks? Can it move from prototype learning to certification-grade evidence?

These questions matter because aerospace development is unforgiving. A facility that excels in early experimentation but lacks validation discipline can create expensive delays later. Likewise, a site with compliance expertise but weak prototyping capability may slow innovation before the most valuable design options are explored.

The strongest facilities create a continuum from early research to late-stage verification. That continuum is often what separates isolated labs from true strategic R&D assets. Evaluators should therefore judge facilities as integrated systems rather than as collections of expensive equipment.

Advanced test infrastructure is the first non-negotiable requirement

At the highest level, top Aerospace Engineering R&D facilities are defined by the quality, relevance, and accessibility of their testing infrastructure. Engineering decisions in aerospace must be evidence-based, and evidence depends on whether testing environments match real operating conditions closely enough.

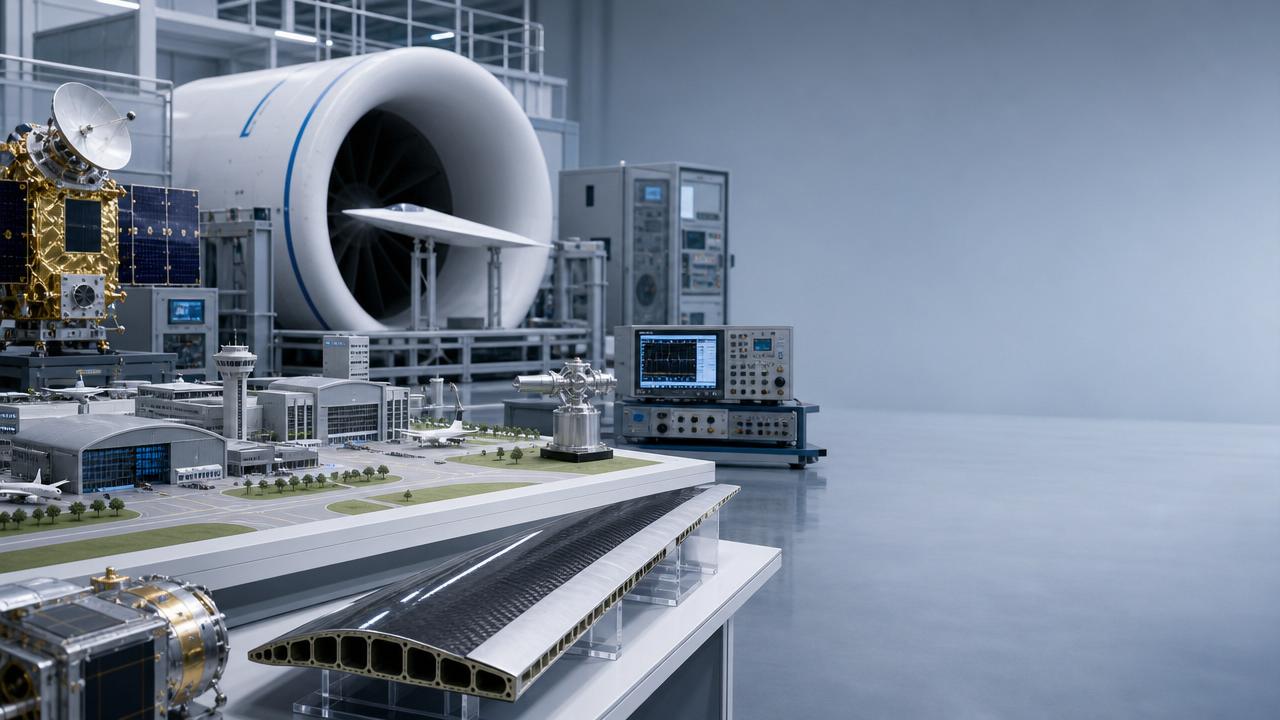

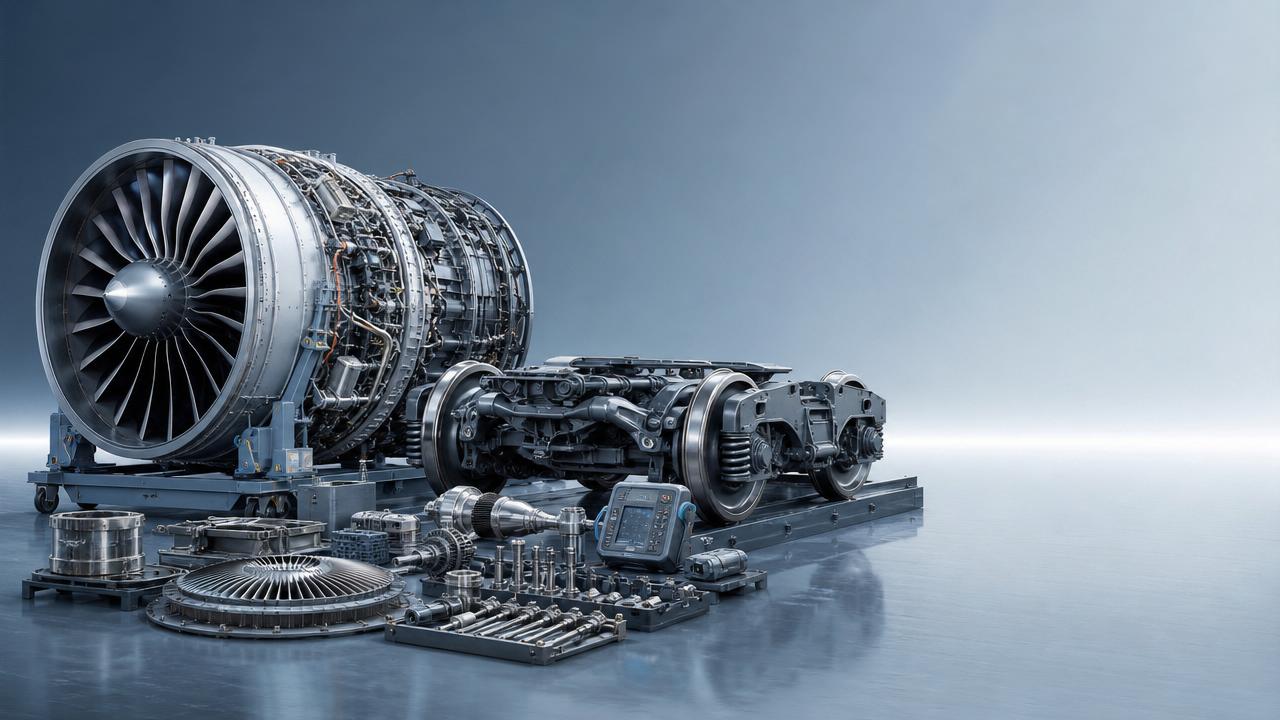

For airframe programs, this includes structural test rigs, fatigue and damage-tolerance laboratories, environmental chambers, vibration and acoustic testing, wind tunnel access, and instrumentation systems that produce traceable, high-resolution data. A facility without such capabilities may still innovate, but it cannot validate at the level most programs require.

For propulsion and thermal systems, the bar is even higher. Leading sites support combustion testing, cryogenic handling, thermal cycling, pressure testing, failure-mode analysis, and, increasingly, electrified propulsion validation. In emerging mobility segments, battery abuse testing and high-voltage safety capability are now essential, not optional.

Evaluators should also examine throughput and configuration flexibility. A facility may own impressive equipment, yet become a bottleneck because setup times are long, instrumentation workflows are fragmented, or test articles cannot be rapidly reconfigured. Top facilities support both precision and iteration speed.

Another key marker is whether physical test assets are connected to digital models. When structural, aerodynamic, propulsion, and controls data are continuously used to update simulations, teams can detect discrepancies early and reduce costly redesign loops. Test infrastructure becomes much more valuable when it is embedded in a digital feedback system.

Cross-disciplinary integration matters more than isolated excellence

Aerospace systems often fail at interfaces rather than within isolated components. That is why top Aerospace Engineering R&D facilities are built around systems integration, not just discipline-specific excellence. A great propulsion lab alone does not define a top facility if controls, thermal management, software, and structural interfaces are weakly coordinated.

Technical evaluators should ask how effectively mechanical, electrical, software, materials, manufacturing, and certification teams work together. In advanced aerospace programs, the competitive advantage often comes from how quickly a facility can identify tradeoffs across disciplines and close them with evidence.

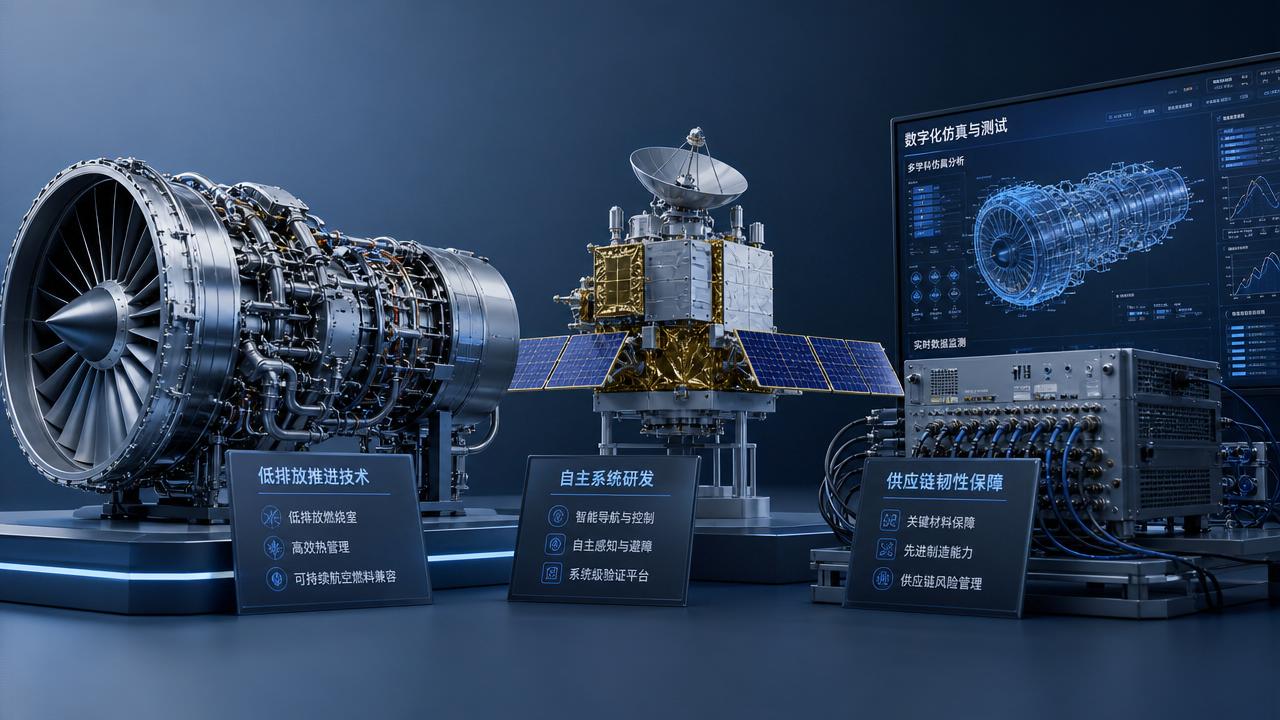

This is particularly important in programs such as eVTOL, autonomous aircraft, reusable launch systems, and high-speed transport platforms. In these domains, propulsion architecture affects thermal loads, which affect materials, which affect manufacturing choices, which affect maintainability, which ultimately affect certification and economics.

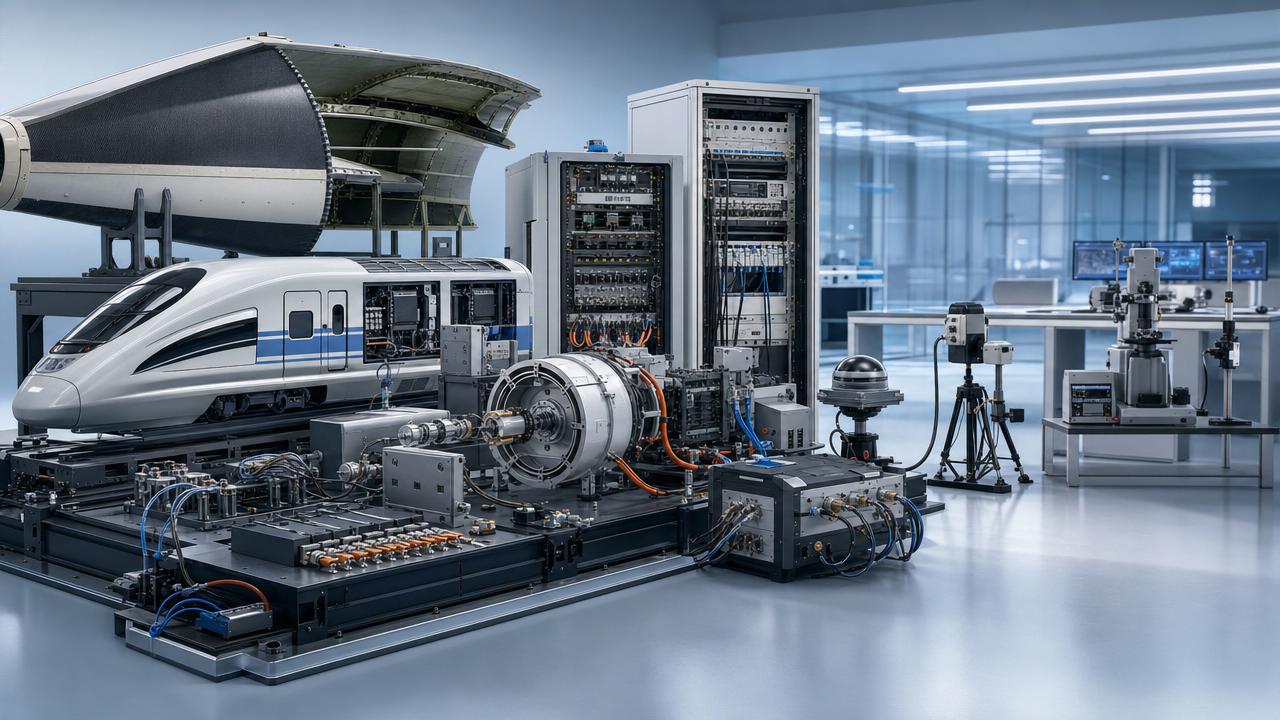

The best facilities are organized to make these interactions visible early. They often use integrated program review structures, model-based systems engineering, digital twins, hardware-in-the-loop testing, and shared requirements management tools. Those mechanisms reduce the risk that teams optimize local subsystems while degrading total platform performance.

Evaluators should also observe whether integration happens only at milestone reviews or continuously during development. Facilities that enable daily interaction between design, test, software, and compliance groups usually outperform organizations where handoffs are formal, slow, and siloed.

Certification readiness separates research capability from deployable capability

Many facilities can produce breakthroughs. Far fewer can turn those breakthroughs into certifiable aerospace systems. For technical evaluators, this is one of the most important distinctions when benchmarking Aerospace Engineering R&D facilities.

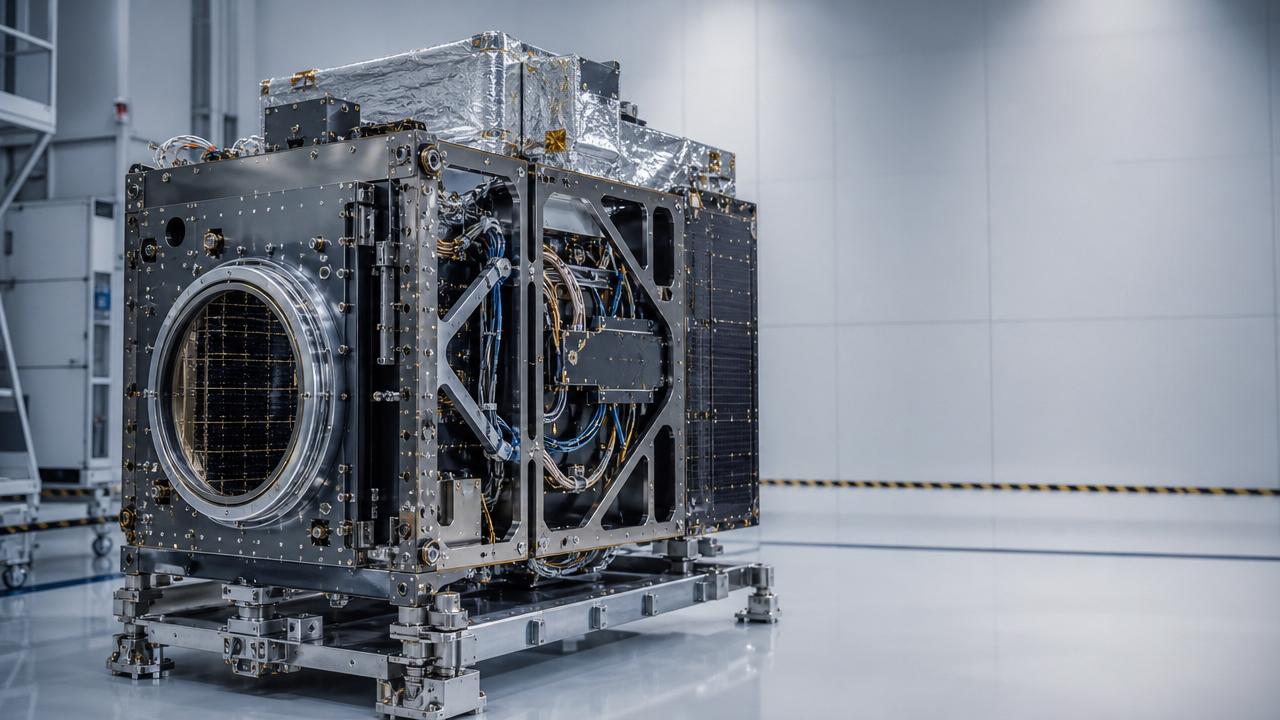

Certification readiness means more than familiarity with standards. It means the facility’s processes, documentation habits, verification methods, configuration control, and quality systems are structured to generate evidence acceptable to regulators, customers, and prime contractors.

In practical terms, evaluators should look for traceability between requirements, design changes, test procedures, results, anomalies, and corrective actions. If a facility cannot maintain that chain cleanly, it may produce useful research but struggle to support type certification, airworthiness approvals, or mission assurance reviews.
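
As a rough illustration of what that chain looks like when checked mechanically, the sketch below walks from each requirement to its linked tests, results, and anomalies and reports where the chain breaks. The record types (`Requirement`, `ProcedureRecord`, `Anomaly`) and the function `find_trace_gaps` are hypothetical names invented for this example; a real facility would hold this data in its own requirements and quality management tools.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    test_ids: list = field(default_factory=list)  # linked test procedures


@dataclass
class ProcedureRecord:
    test_id: str
    result: str = ""  # "pass", "fail", or "" if nothing was recorded
    anomaly_ids: list = field(default_factory=list)


@dataclass
class Anomaly:
    anomaly_id: str
    corrective_action: str = ""  # empty means no action recorded yet


def find_trace_gaps(requirements, tests, anomalies):
    """Return human-readable descriptions of breaks in the evidence chain."""
    tests_by_id = {t.test_id: t for t in tests}
    anomalies_by_id = {a.anomaly_id: a for a in anomalies}
    gaps = []
    for req in requirements:
        if not req.test_ids:
            gaps.append(f"{req.req_id}: no test procedure linked")
        for tid in req.test_ids:
            test = tests_by_id.get(tid)
            if test is None:
                gaps.append(f"{req.req_id}: linked test {tid} not found")
                continue
            if not test.result:
                gaps.append(f"{req.req_id}: test {tid} has no recorded result")
            for aid in test.anomaly_ids:
                anomaly = anomalies_by_id.get(aid)
                if anomaly is None or not anomaly.corrective_action:
                    gaps.append(
                        f"{req.req_id}: anomaly {aid} on {tid} has no corrective action"
                    )
    return gaps
```

An empty list from `find_trace_gaps` would suggest the sampled chain is intact; any entries point to the specific requirement, test, or anomaly where evidence is missing. This is only a structural check and says nothing about whether the evidence itself is technically adequate.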

Facilities working at the highest level also understand the difference between exploratory testing and qualification testing. They know when speed is appropriate, when rigor must increase, and how to transition from one mode to the other without losing continuity in data and engineering logic.

For organizations operating globally, alignment with FAA, EASA, NASA, ESA, DoD, ISO, and other relevant frameworks can be a major differentiator. A facility that understands multiple regulatory environments can support product families, international partnerships, and future market expansion with less reinvention.

Digital engineering maturity is now a defining benchmark

Modern Aerospace Engineering R&D facilities are increasingly evaluated by how well they combine physical development with digital engineering. This includes simulation environments, model-based systems engineering, real-time telemetry pipelines, configuration management, and digital thread continuity across the product lifecycle.

A strong digital backbone improves more than productivity. It strengthens decision quality. When design teams, test engineers, manufacturing specialists, and certification staff work from synchronized data structures, they detect requirement conflicts faster and make evidence-based tradeoffs earlier.

Technical evaluators should therefore assess whether the facility can support closed-loop development. For example, can aerodynamic test data update simulation models rapidly? Can software behavior be validated in hardware-in-the-loop environments before expensive flight testing? Can manufacturing deviations be traced back to design assumptions?

The most advanced facilities use digital engineering to compress learning cycles rather than simply to create polished dashboards. Their tools support predictive maintenance of test assets, automated anomaly detection, design space exploration, and rapid comparison between simulated and observed performance.
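
The comparison between simulated and observed performance can be sketched in a few lines. The example below is a minimal illustration, assuming both data sets are available as name-to-value mappings; the function name `flag_discrepancies`, the quantity names, and the 5% relative tolerance are assumptions chosen for the example, not values taken from any standard.

```python
def flag_discrepancies(simulated, observed, rel_tol=0.05):
    """Return quantities whose simulated value differs from the observed
    value by more than rel_tol (relative to the observed value)."""
    flagged = {}
    for name, sim_value in simulated.items():
        if name not in observed:
            continue  # nothing measured for this quantity, so nothing to compare
        obs_value = observed[name]
        scale = max(abs(obs_value), 1e-12)  # guard against division by zero
        rel_error = abs(sim_value - obs_value) / scale
        if rel_error > rel_tol:
            flagged[name] = rel_error
    return flagged


# Hypothetical wind tunnel comparison
simulated = {"lift_coeff": 1.20, "drag_coeff": 0.030}
observed = {"lift_coeff": 1.22, "drag_coeff": 0.036}
print(flag_discrepancies(simulated, observed))
```

In this made-up case only the drag coefficient exceeds the tolerance, which is the kind of signal that would prompt a model update or a closer look at the test setup. A real pipeline would also account for measurement uncertainty rather than relying on a single fixed tolerance.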

In sectors such as advanced aviation, space systems, and autonomous mobility, digital maturity also supports safer experimentation. Teams can eliminate weaker concepts in simulation before committing scarce prototype resources. That efficiency becomes especially valuable in capital-intensive programs with long certification horizons.

Talent architecture and institutional knowledge are strategic assets

Even the best equipment does not guarantee strong outcomes. Top Aerospace Engineering R&D facilities are defined by the quality of their people, how those people collaborate, and how knowledge is retained across programs. For evaluators, talent architecture is as important as infrastructure.

The strongest facilities bring together specialists in aerodynamics, structures, flight controls, embedded systems, propulsion, safety engineering, certification, manufacturing, and data analysis. But the real advantage appears when these experts share a common decision framework and can translate between disciplines effectively.

Evaluators should pay attention to program leadership structure, not just resumes. Are technical decisions escalated efficiently? Is there a clear chief engineer function? Are test anomalies resolved through disciplined root-cause analysis? Does the organization preserve lessons learned or repeatedly rediscover them?

High-performing facilities also invest in technician capability, instrumentation expertise, and test operations discipline. In aerospace, execution quality at the lab and rig level often determines whether data are useful, repeatable, and certifiable. This operational layer is sometimes overlooked in superficial evaluations.

Knowledge continuity is another major factor. Facilities with strong documentation, reusable design frameworks, validated methods, and institutional memory can scale programs more effectively than organizations dependent on a small number of irreplaceable experts.

Manufacturing readiness and prototype transition capability drive real value

One common weakness in R&D environments is the gap between engineering proof and manufacturable reality. Top Aerospace Engineering R&D facilities reduce that gap early by integrating prototyping, producibility assessment, and industrialization thinking into research workflows.

This does not mean every facility needs full-rate production capability. It does mean the facility should understand tooling constraints, material variability, inspection demands, supply chain realities, and how design choices affect assembly, maintenance, and lifecycle cost.

For technical evaluators, a critical question is whether prototypes are built only to demonstrate performance or also to expose manufacturing risk. Facilities that test assembly sequence, tolerance stack-up, repair access, and inspection methods early tend to reduce surprises later in development.

In advanced composites, additive manufacturing, high-temperature materials, and electrified propulsion systems, this capability is particularly important. Novel designs often fail commercially not because the physics are weak, but because production repeatability, quality assurance, or maintainability were underdeveloped during R&D.

Facilities that can bridge laboratory innovation and industrial deployment offer much greater strategic value. They help organizations make better investment decisions by showing not only whether a concept works, but whether it can be built, certified, maintained, and scaled responsibly.

How technical evaluators can assess a facility with confidence

A practical evaluation framework should balance capability, credibility, and fit-for-purpose relevance. The goal is not to find a universally perfect site, but to determine whether a facility is strong enough for the specific aerospace mission, risk profile, and maturity stage under review.

Start with infrastructure relevance. Confirm that the facility’s test assets match the operating environment, load cases, propulsion architecture, software complexity, and certification path of the intended program. Generic capability statements are not enough; evaluators need program-relevant evidence.

Next, review integration maturity. Ask how data flow between design, simulation, test, quality, and compliance functions. Look for examples of multidisciplinary issue resolution. Facilities that can demonstrate fast, traceable learning loops are usually more capable than those relying on fragmented workflows.

Then assess governance and quality discipline. Review documentation practices, anomaly management, calibration control, cybersecurity posture, and configuration management. These factors may seem secondary during early innovation, but they strongly influence whether results can support major downstream decisions.

Finally, test the facility’s transition capability. Ask for proof that it has supported technology maturation beyond concept demonstration. Evidence of successful movement into qualification, certification, pilot production, or operational deployment is often the clearest sign of real R&D strength.
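
One way to make the four steps above comparable across candidate sites is a simple weighted scorecard. The sketch below is illustrative only: the criterion names mirror the steps in this section, but the weights, the 1 to 5 rating scale, and the floor value are assumptions that each evaluation team would need to set for its own program.

```python
# Hypothetical weights; adjust to the program's mission, risk profile, and maturity.
CRITERIA_WEIGHTS = {
    "infrastructure_relevance": 0.30,
    "integration_maturity": 0.25,
    "governance_quality": 0.20,
    "transition_capability": 0.25,
}


def score_facility(ratings, weights=CRITERIA_WEIGHTS, floor=2):
    """Return (weighted score, list of criteria rated below the floor).

    ratings maps each criterion to a rating on an assumed 1-5 scale.
    """
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    weighted = sum(weights[c] * ratings[c] for c in weights)
    total = weighted / sum(weights.values())
    # Report weak criteria separately so a high average cannot hide them.
    weak = sorted(c for c in weights if ratings[c] < floor)
    return round(total, 2), weak


ratings = {
    "infrastructure_relevance": 4,
    "integration_maturity": 3,
    "governance_quality": 1,
    "transition_capability": 5,
}
print(score_facility(ratings))
```

Returning the weak criteria alongside the average reflects the point that facilities should be judged as integrated systems: a site with excellent test assets but poor governance may still be unsuitable, even if its overall score looks acceptable.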

Conclusion: the best facilities turn complexity into certifiable progress

What defines top Aerospace Engineering R&D facilities is not prestige, building size, or capital expenditure alone. The defining standard is whether the facility can convert technical complexity into validated, traceable, and deployable progress across the full development pathway.

For technical evaluators, the most valuable facilities are those that combine advanced testing infrastructure, systems integration capability, certification discipline, digital engineering maturity, strong talent architecture, and prototype-to-production awareness. These factors create confidence that innovation will survive contact with regulation, manufacturing, and real-world operations.

In aerospace and advanced mobility, that confidence is a strategic advantage. Organizations that choose their R&D environments carefully are better positioned to reduce development risk, accelerate decision cycles, and deliver safer, more competitive systems to market.
